
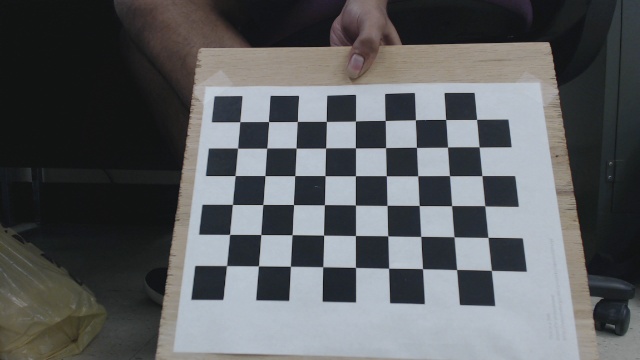

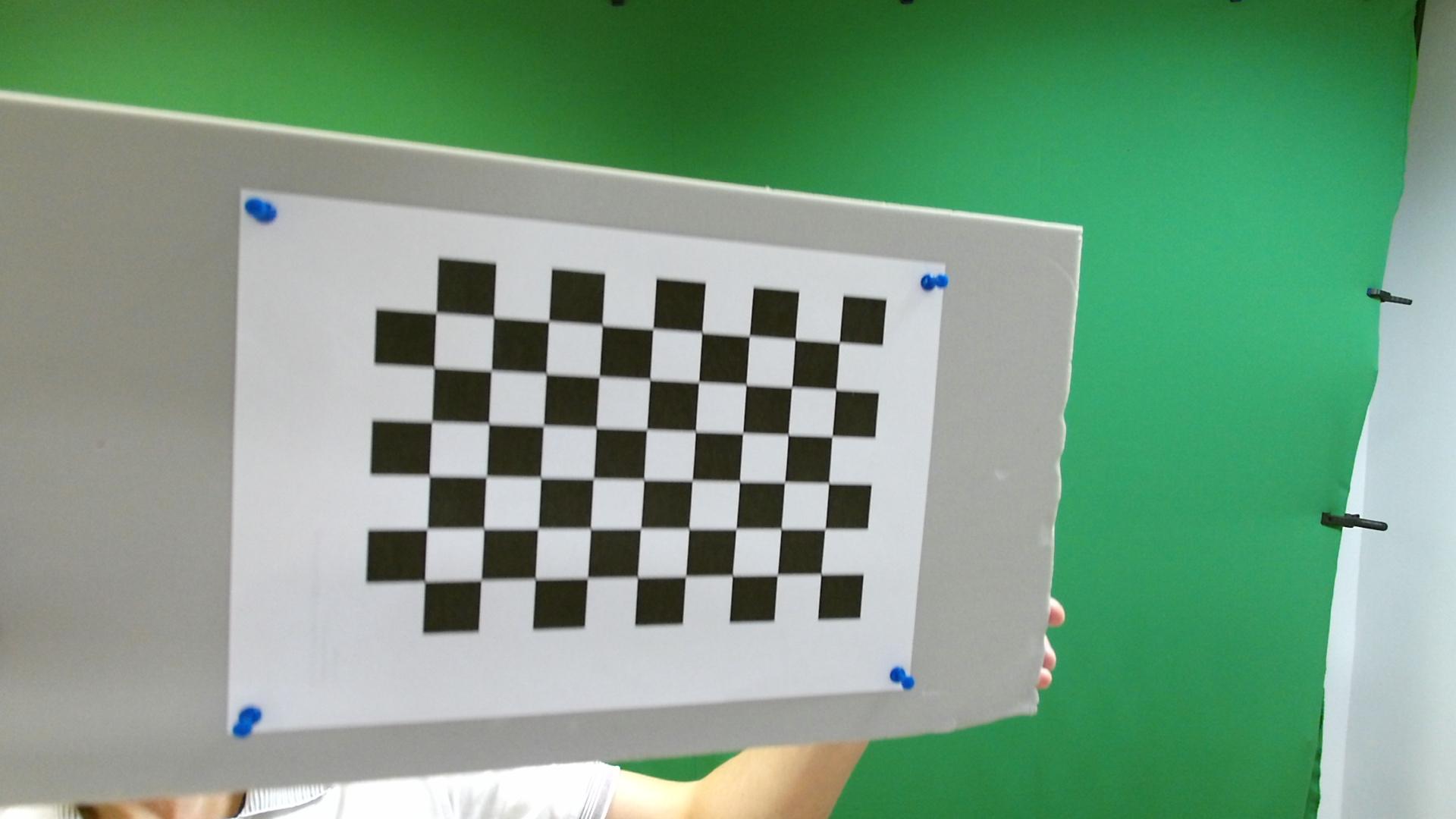

At the same time, newer features such as YOLO, Aruco marker detection and others have been brought in for you to play with. Most of the features available in the ImagePack made it into VL.OpenCV, with the exception of Structured Light, Feature Detection, StereoCalibrate and some of the Contour detection functionality.

Step 3: Interactive calibration. Set the sz according to the size of the board you printed and measured in step 1. For mine, the black square was 3.2 centimeters. Run the opencv_interactive-calibration app from the directory where you stored the configuration xml file.

`double rms stereoCalibrate (objectPoints, imagePoints0, imagePoints1,`

calibrateCamera: Finds the camera intrinsic and extrinsic parameters from several views of a calibration pattern.

C++: `double calibrateCamera(InputArrayOfArrays objectPoints, InputArrayOfArrays imagePoints, Size imageSize, InputOutputArray cameraMatrix, InputOutputArray distCoeffs, OutputArrayOfArrays rvecs, OutputArrayOfArrays tvecs, int flags=0)`

Python: `cv2.calibrateCamera(objectPoints, imagePoints, imageSize[, cameraMatrix[, distCoeffs[, rvecs[, tvecs[, flags]]]]]) → retval, cameraMatrix, distCoeffs, rvecs, tvecs`

C: `double cvCalibrateCamera2(const CvMat* objectPoints, const CvMat* imagePoints, const CvMat* pointCounts, CvSize imageSize, CvMat* cameraMatrix, CvMat* distCoeffs, CvMat* rvecs=NULL, CvMat* tvecs=NULL, int flags=0)`

Parameters of calibrateCamera:

objectPoints – In the new interface it is a vector of vectors of calibration pattern points in the calibration pattern coordinate space. If the same calibration pattern is shown in each view and it is fully visible, all the vectors will be the same. It is, however, possible to use partially occluded patterns, or even different patterns in different views. Then, the vectors will be different.

imagePoints – Vector of vectors of the projections of the calibration pattern points. imagePoints.size() must be equal to objectPoints.size(), and imagePoints[i].size() must be equal to objectPoints[i].size() for each i.

pointCounts – In the old interface this is a vector of integers containing as many elements as the number of views of the calibration pattern. Each element is the number of points in each view. Usually, all the elements are the same and equal to the number of feature points on the calibration pattern.

imageSize – Size of the image, used only to initialize the intrinsic camera matrix.

Stereocalibrate OpenCV code in Python. In this post, we discuss classical methods for stereo matching and for depth perception. We explain depth perception using a stereo camera and OpenCV. We share the code in Python and C++ for hands-on experience.

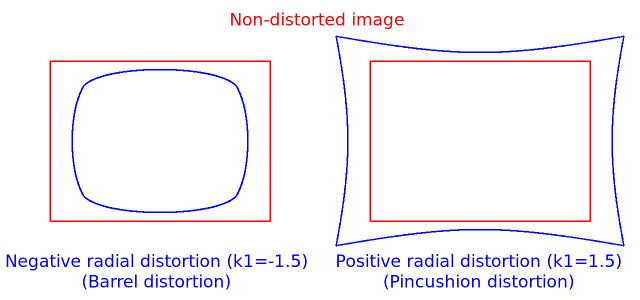

The calibrateCamera flags include:

CV_CALIB_ZERO_TANGENT_DIST – Tangential distortion coefficients are set to zeros and stay zero.

CV_CALIB_FIX_K1, ..., CV_CALIB_FIX_K6 – The corresponding radial distortion coefficient is not changed during the optimization. If CV_CALIB_USE_INTRINSIC_GUESS is set, the coefficient from the supplied distCoeffs matrix is used. Otherwise, it is set to 0.

CV_CALIB_RATIONAL_MODEL – Coefficients k4, k5, and k6 are enabled. To provide the backward compatibility, this extra flag should be explicitly specified to make the calibration function use the rational model and return 8 coefficients.

The coordinates of 3D object points and their corresponding 2D projections in each view must be specified. That may be achieved by using an object with a known geometry and easily detectable feature points. Such an object is called a calibration rig or calibration pattern, and OpenCV has built-in support for a chessboard as a calibration rig (see findChessboardCorners()). 3D calibration rigs can also be used as long as an initial cameraMatrix is provided.

The algorithm performs the following steps:

1. Compute the initial intrinsic parameters (the option is only available for planar calibration patterns) or read them from the input parameters. The distortion coefficients are all set to zeros initially unless some of CV_CALIB_FIX_K? are specified.
2. Estimate the initial camera pose as if the intrinsic parameters were already known.
3. Run the global Levenberg-Marquardt optimization algorithm to minimize the reprojection error, that is, the total sum of squared distances between the observed feature points imagePoints and the object points objectPoints projected using the current estimates of the camera parameters and the poses.

calibrationMatrixValues

C++: `void calibrationMatrixValues(InputArray cameraMatrix, Size imageSize, double apertureWidth, double apertureHeight, double& fovx, double& fovy, double& focalLength, Point2d& principalPoint, double& aspectRatio)`

Python: `cv2.calibrationMatrixValues(cameraMatrix, imageSize, apertureWidth, apertureHeight) → fovx, fovy, focalLength, principalPoint, aspectRatio`

Parameters:

cameraMatrix – Input camera matrix that can be estimated by calibrateCamera() or stereoCalibrate().

imageSize – Input image size in pixels.

apertureWidth – Physical width of the sensor.

fovx – Output field of view in degrees along the horizontal sensor axis.

fovy – Output field of view in degrees along the vertical sensor axis.

focalLength – Focal length of the lens in mm.

composeRT

C++: `void composeRT(InputArray rvec1, InputArray tvec1, InputArray rvec2, InputArray tvec2, OutputArray rvec3, OutputArray tvec3, OutputArray dr3dr1=noArray(), OutputArray dr3dt1=noArray(), OutputArray dr3dr2=noArray(), OutputArray dr3dt2=noArray(), OutputArray dt3dr1=noArray(), OutputArray dt3dt1=noArray(), OutputArray dt3dr2=noArray(), OutputArray dt3dt2=noArray())`

Python: `cv2.composeRT(rvec1, tvec1, rvec2, tvec2[, rvec3[, tvec3[, dr3dr1[, dr3dt1[, dr3dr2[, dr3dt2[, dt3dr1[, dt3dt1[, dt3dr2[, dt3dt2]]]]]]]]]]) → rvec3, tvec3, dr3dr1, dr3dt1, dr3dr2, dr3dt2, dt3dr1, dt3dt1, dt3dr2, dt3dt2`

Parameters:

rvec3 – Output rotation vector of the superposition.
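To make the calibrationMatrixValues outputs more concrete, here is a rough pure-Python sketch of how such values can be derived from a pinhole camera matrix. The helper name `matrix_values`, the sample camera matrix and the 6.4 mm x 4.8 mm sensor size are assumptions for illustration. It uses the standard pinhole relations and is not OpenCV's own implementation, which may differ in details.

```python
import math


def matrix_values(camera_matrix, image_size, aperture_width, aperture_height):
    """Hypothetical helper returning the outputs listed for calibrationMatrixValues."""
    fx, fy = camera_matrix[0][0], camera_matrix[1][1]
    cx, cy = camera_matrix[0][2], camera_matrix[1][2]
    width, height = image_size

    # Field of view from the pinhole model, split at the principal point.
    fovx = math.degrees(math.atan2(cx, fx) + math.atan2(width - cx, fx))
    fovy = math.degrees(math.atan2(cy, fy) + math.atan2(height - cy, fy))

    # Pixels per millimetre; fall back to 1 when no sensor size is given.
    mx = width / aperture_width if aperture_width else 1.0
    my = height / aperture_height if aperture_height else 1.0

    focal_length = fx / mx              # in mm when the aperture is in mm
    principal_point = (cx / mx, cy / my)
    aspect_ratio = fy / fx
    return fovx, fovy, focal_length, principal_point, aspect_ratio


# Assumed example values: 640x480 image, fx = fy = 800 px, 6.4 x 4.8 mm sensor.
K = [[800.0, 0.0, 320.0],
     [0.0, 800.0, 240.0],
     [0.0, 0.0, 1.0]]
fovx, fovy, focal_length, principal_point, aspect_ratio = matrix_values(
    K, (640, 480), 6.4, 4.8
)
print(fovx, fovy, focal_length, principal_point, aspect_ratio)
```

For a real camera, the corresponding call would be `cv2.calibrationMatrixValues` with the camera matrix returned by calibration.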

computeCorrespondEpilines: For every point in one of the two images of a stereo pair, the function finds the equation of the corresponding epipolar line in the other image. From the fundamental matrix definition (see findFundamentalMat()), the line $l^{(2)}_i$ in the second image for the point $p^{(1)}_i$ in the first image (when whichImage=1) is computed as $l^{(2)}_i = F p^{(1)}_i$.

lines – Output vector of the epipolar lines corresponding to the points in the other image. Each line $ax + by + c = 0$ is encoded by 3 numbers $(a, b, c)$.

decomposeProjectionMatrix: Decomposes a projection matrix into a rotation matrix and a camera matrix.
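The epipolar line computation can be sketched in a few lines of plain Python. The helper `epipolar_lines` and the sample fundamental matrix (the one for an idealized, purely horizontal camera shift) are assumptions for illustration, not OpenCV code. Each line is returned as three numbers (a, b, c) for the line a*x + b*y + c = 0, scaled so that a² + b² = 1.

```python
import math


def epipolar_lines(points, which_image, F):
    """Hypothetical helper: epipolar lines in the other image for each point."""
    lines = []
    for x, y in points:
        p = (x, y, 1.0)  # homogeneous image point
        if which_image == 1:
            # Points are from image 1: line in image 2 is F * p.
            a, b, c = (sum(F[r][k] * p[k] for k in range(3)) for r in range(3))
        else:
            # Points are from image 2: line in image 1 is F^T * p.
            a, b, c = (sum(F[k][r] * p[k] for k in range(3)) for r in range(3))
        norm = math.hypot(a, b)
        if norm > 0:
            a, b, c = a / norm, b / norm, c / norm
        lines.append((a, b, c))
    return lines


# Assumed fundamental matrix of an ideal rectified pair (horizontal shift only).
F = [[0.0, 0.0, 0.0],
     [0.0, 0.0, -1.0],
     [0.0, 1.0, 0.0]]

lines = epipolar_lines([(100.0, 50.0)], 1, F)
print(lines)  # the line -y + 50 = 0, i.e. the same image row in the other view
```

In this rectified setup every epipolar line is horizontal, which is why stereo matching only has to search along a single row.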


Author: Alex
